Martijn van der Does

Executive Design Director

2 Dec 2024, 7 min

Introduction

In this issue, explore a curated selection of insights, including our deep dive into Dynamic User Experiences (DUX), crafted to enhance your understanding of how AI, data, and design can transform the future of user interfaces.

In recent years, the tech world has buzzed with excitement over generative interfaces and AI-assisted design. Prominent voices, including investment groups like Andreessen Horowitz (a16z), herald these technologies as revolutionary for UI/UX design. But how close are these promises to reality? This article explores the practical applications, limitations, and potential of generative interfaces, arguing that while they offer exciting possibilities, their true value lies in augmenting human creativity rather than replacing it.

Specifically, we'll be looking at Dynamic User Experiences (DUX), a promising new design paradigm integrating technologies such as artificial intelligence with established best practices. DUX refers to interfaces that adapt in real-time to user needs, context, and behavior, offering a more responsive and personalized user experience.

The concept of AI as a design copilot, as proposed by Li and Li of a16z, is appealing but oversimplifies the design process. They claim that "for a designer, the process of fleshing out design becomes less about pixel manipulation and more about ideating." However, this view underestimates the nuanced nature of design work.

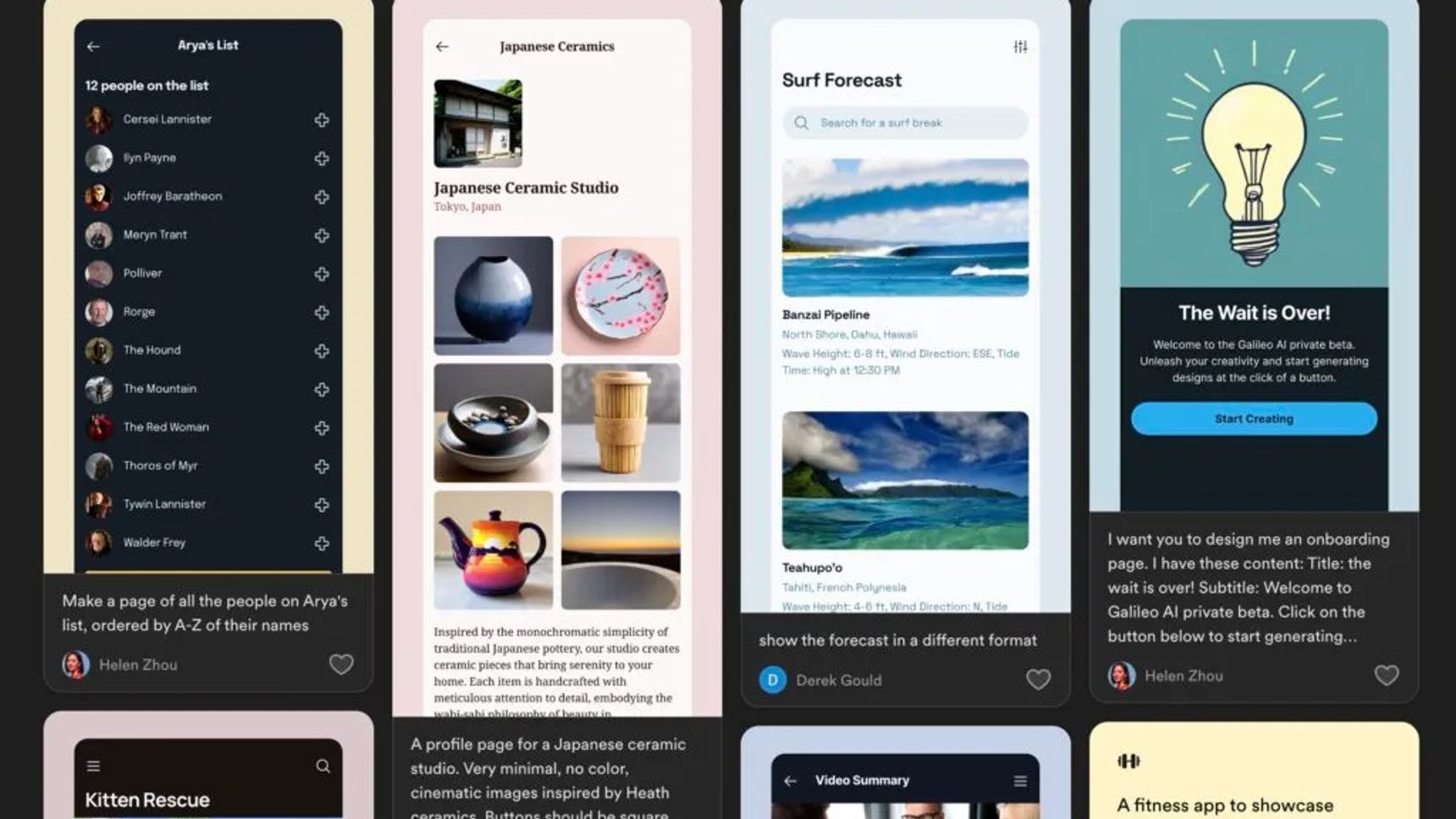

While more specialized tools like Galileo AI may produce better results, they still lack the soul and uniqueness that experienced designers value.

Treating design as a code to crack or a formula to solve overlooks the essence of good design. Design requires a level of nuance that a copilot, at least for now, is simply incapable of. Li and Li inadvertently acknowledge this when discussing the challenges of translating design into code. One such challenge is getting experienced designers to accept a copilot in the first place.

More senior designers value speed, control, and uniqueness. While AI excels at speed, it often lacks control and uniqueness. For experienced designers, these tools can be more of a barrier than a help. But there's potential in reimagining these tools as translators rather than copilots. In that role, they can:

This approach aligns with the growing trend of "design engineers" who work at the intersection of code and design. While these professionals may not need translators, for many teams, generative UI tools can bridge the gap between design and non-design professionals, fostering better collaboration in product development.

For example, at a leading tech company, an AI-powered tool helped product managers create rough mockups of their ideas, which designers could then refine and perfect. This approach significantly reduced miscommunication and accelerated the design process.

Now that we've established the role of some of the AI tools (I feel like new ones are being released every day) in the design process, let's explore how they can contribute to the creation of Dynamic User Experiences.

While generative UI tools may not revolutionize the design process itself, they do hold significant potential for creating DUX.

One proponent of such a system is Fast Company guest writer Peter Smart. In his own words, he describes his vision of DUX components as "based on blocks—small, modular components of an experience, whether content, function, or User Interface (UI)—that can be dynamically assembled and adjusted in real-time by AI." (Smart, Fast Company, June 9th, 2024)

This concept follows the modular approach of tools like Galileo AI but avoids their misunderstanding of design workflows and details. Indeed, though these simple, stackable components may seem limiting for creating UI, they're ideal for dynamically remaking and adjusting interfaces. To implement DUX in such a way requires:

This approach allows for interfaces that are both feature-rich and intuitively simple. It's a delicate balance: the UI must be flexible enough to adapt to user needs, yet consistent enough to remain learnable and familiar.

This balance can then produce a fluid, intuitive design language. It's not about eliminating all friction, but about creating interfaces that feel natural and responsive to user needs. Such interfaces can dramatically improve the user experience by presenting only what's needed at any given moment, adapting to the user's context and goals without depriving them of vital anchor points.
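The block-based assembly described above can be made concrete with a small sketch. It is only an illustration under stated assumptions: the `Block` fields, the tag-matching score, and the `max_dynamic` cap are all hypothetical and come neither from Smart's article nor from any existing tool. The idea it shows is the balance between flexibility and familiarity: anchor blocks are always rendered in the same order, while the remaining blocks are chosen by how well they match the current context.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Block:
    """One modular piece of UI. All field names are illustrative assumptions."""
    name: str
    tags: frozenset[str]   # contexts in which this block is relevant
    anchor: bool = False   # anchors are always shown, in a fixed order


def assemble(blocks: list[Block], context: set[str], max_dynamic: int = 2) -> list[str]:
    """Return the block names to render: every anchor, then the best-matching dynamic blocks."""
    anchors = [b.name for b in blocks if b.anchor]
    # Score each non-anchor block by how many of its tags match the current context.
    scored = [(len(b.tags & context), b.name) for b in blocks if not b.anchor]
    # Drop blocks with no match; sort by score (highest first), then by name for stability.
    relevant = sorted((s for s in scored if s[0] > 0), key=lambda s: (-s[0], s[1]))
    return anchors + [name for _, name in relevant[:max_dynamic]]


# Hypothetical block library for a personal dashboard.
BLOCKS = [
    Block("navigation", frozenset(), anchor=True),
    Block("search", frozenset(), anchor=True),
    Block("boarding_pass", frozenset({"travelling", "morning"})),
    Block("commute_times", frozenset({"morning", "weekday"})),
    Block("dinner_ideas", frozenset({"evening"})),
]

print(assemble(BLOCKS, context={"morning", "weekday"}))
print(assemble(BLOCKS, context={"evening"}))
```

Whatever the context, `navigation` and `search` stay in place, which is one simple way to keep the vital anchor points while everything else adapts. In a real product the scoring would likely come from a model rather than from tag overlap.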

Building on the concept of DUX, let's explore how we can create intuitive interfaces from complex systems.

At Hypersolid, we have an empowering vision that guides our design teams:

“Simplify complexity. Unite design, technology, and data to fuel creativity”

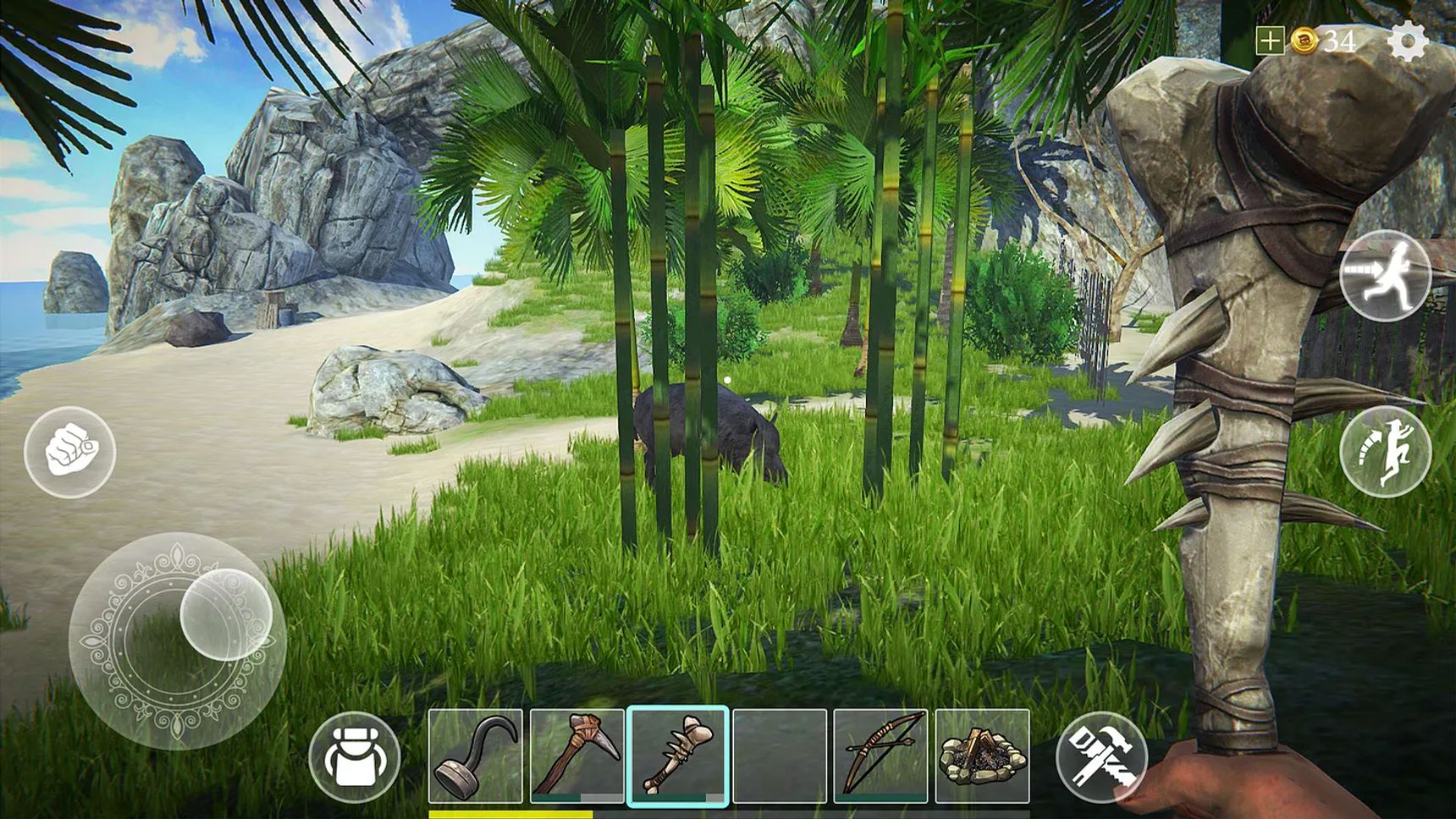

Creating simple, intuitive interfaces often requires complex underlying systems. Video games provide excellent examples of this principle in action. As noted by the UX Collective: "Videogames are notoriously famous for keeping the interface simple while relying on visual hints and low-consequential tutorials to progressively introduce mechanics and more complex operations."

This approach - presenting only what's necessary at any given moment - is at the heart of effective DUX. It requires careful consideration of user needs, context, and cognitive load. The UX Collective emphasizes that "less is only more in design when nothing vital is missing, and nothing irrelevant is over-emphasized." This principle is crucial for DUX implementation.

Another example of simplifying complexity can be seen in modern smart home systems. Despite controlling a variety of complex devices and functions, the best interfaces present users with simple, intuitive controls that adapt based on time of day, user preferences, and other contextual factors.

Careful consideration of user needs, context, and cognitive load is what sets effective minimalism apart from wasted space. Thus, a well-designed dynamic interface should feel natural and intuitive to users, automatically adapting to their needs without causing confusion or disorientation. Maintaining this seamless experience requires meticulous attention to detail.

The power of DUX lies in its ability to codify and consistently manifest user comfort across a dynamic layout. By intelligently presenting and hiding UI elements based on context, DUX can provide a rich, feature-full experience without overwhelming the user.

This balance of simplicity and complexity is what makes DUX so powerful - and so challenging to implement effectively. It requires not just technological sophistication, but a deep understanding of user psychology and behavior.

While the potential of DUX is exciting, it's crucial to address the challenges that come with its implementation. Let's look at three significant hurdles:

By June 2025, all large-scale digital products and services must comply with the new Digital Accessibility Act (DAA). To guarantee compliance with upcoming DAA standards, every DUX component, and every combination of components, would need to account for factors such as proper contrast, correct naming of links and buttons, usage of labels, defining the language of all elements, and ensuring there are no empty buttons. Once accessibility has been accounted for, interactions must also respect user privacy.
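Because DUX layouts are assembled at runtime, checks like these cannot be done once by hand; they would have to run against every generated combination. The sketch below shows what two of them (contrast and empty buttons) could look like. It assumes the WCAG 2.x contrast-ratio formula and its 4.5:1 threshold for normal text as the measurable rule, which is our assumption rather than something the text above specifies, and the `audit` function and its dictionary keys are hypothetical.

```python
def _channel(value: int) -> float:
    """Linearize one 8-bit sRGB channel, following the WCAG 2.x relative-luminance definition."""
    c = value / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def luminance(hex_color: str) -> float:
    """Relative luminance of a '#rrggbb' color."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _channel(r) + 0.7152 * _channel(g) + 0.0722 * _channel(b)


def contrast_ratio(fg: str, bg: str) -> float:
    """WCAG contrast ratio, from 1.0 (identical colors) to 21.0 (black on white)."""
    lighter, darker = sorted((luminance(fg), luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


def audit(block: dict) -> list[str]:
    """Return the problems found in one generated block (hypothetical keys: role, label, fg, bg)."""
    problems = []
    # Buttons and links need a non-empty accessible name.
    if block.get("role") in {"button", "link"} and not block.get("label", "").strip():
        problems.append("empty label")
    # 4.5:1 is the WCAG AA minimum for normal-size text (assumed threshold).
    if contrast_ratio(block["fg"], block["bg"]) < 4.5:
        problems.append("low contrast")
    return problems


print(audit({"role": "button", "label": "", "fg": "#777777", "bg": "#ffffff"}))
print(audit({"role": "button", "label": "Save", "fg": "#767676", "bg": "#ffffff"}))
```

A dynamic system could run such an audit on each assembled layout and fall back to a known-good arrangement whenever the list of problems is not empty.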

It's extremely tempting for both individual designers and companies to use all of DUX's data points to profile users. Many, if not most, will prove unable to resist such temptations, potentially unleashing a new wave of dark patterns and stalker-like behavior by platform owners.

The practical desirability and viability of DUX therefore depend as much on robust user protection frameworks as on the implementation itself. So you've got accessibility and privacy covered? Great! Now you need to make sure that your target audience's devices can actually run your DUX.

Generative AI is very resource-intensive. A mobile phone needs at least 8GB of RAM to comfortably run the lighter local models currently available.

Generative DUX may sound exciting, but it’s not practical yet. Only high-end smartphones with 8GB of RAM or more can handle it, and even many laptops and desktops struggle with the demands of these AI models. Even on powerful devices, generative AI still makes too many errors, and as always, the details matter.

To address these challenges, some companies are exploring edge computing solutions and developing more efficient AI models. However, these solutions are still in their early stages and require further development before they can be widely implemented.
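Until those solutions mature, one pragmatic option is to decide per device how much of the experience is generated at all. The sketch below is an assumption-laden illustration, not an established pattern from the sources cited here: the 8GB threshold is the figure mentioned above, while the `Strategy` names and the remote and static fallbacks are hypothetical.

```python
from enum import Enum


class Strategy(Enum):
    ON_DEVICE = "on_device"  # run a lighter local model
    REMOTE = "remote"        # let an edge or cloud service assemble the layout
    STATIC = "static"        # serve a fixed, pre-designed layout


MIN_LOCAL_RAM_GB = 8  # threshold taken from the text above


def choose_strategy(ram_gb: float, online: bool) -> Strategy:
    """Pick how to build the interface for a device (hypothetical decision rule)."""
    if ram_gb >= MIN_LOCAL_RAM_GB:
        return Strategy.ON_DEVICE
    if online:
        return Strategy.REMOTE
    # Low memory and no connection: fall back to a layout designed by hand.
    return Strategy.STATIC


print(choose_strategy(ram_gb=12, online=False))
print(choose_strategy(ram_gb=4, online=True))
print(choose_strategy(ram_gb=4, online=False))
```

The static fallback matters most: it guarantees that users on older devices still get a complete, hand-designed interface instead of a degraded generative one.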

Despite these challenges, the future of UI design is promising. Balancing accessibility, policy adherence, and technical limitations is a constant act, but it's one we can master. Like all previous design paradigms, the success of DUX hinges on thoughtful implementation that augments, rather than replaces, human creativity.

The future of user experiences and interfaces lies not in AI copilots per se, but in AI-powered "agents" that act as translators and assemblers. Guided by human insight and empathy, such tools can enrich rather than hollow out our design processes. It's a collaborative, synergistic approach, rather than a parasitic one.

To realize this future, we must meet several conditions:

Our reward as designers and users will be digital experiences that are truly responsive, intuitive, and delightful. The goal is not to eliminate the human touch in design, but to amplify it, creating interfaces that feel both cutting-edge and warmly familiar.

As we move forward, the key will be to harness the power of generative interfaces responsibly, always keeping the needs and comfort of the end user at the forefront of our design processes. This is where the future of interface design lies. AI won't replace us, but we won't have to do everything by hand. We can enjoy a balance between human intuition and machine efficiency.

"We're going to use AI to democratize things" has practically become a rallying cry, but no one ever specifies what that's supposed to mean. Well, here it is. It means that everyone can participate, and everyone can have a say... but without stepping on each other's toes or disregarding the wisdom and advice of the experts. Rather, it will give a whole new meaning to the phrase "Now you're speaking my language!"

As designers, developers, and innovators, our role is clear: to use these new tools responsibly, always keeping the end-user experience at the heart of our efforts. In doing so, we can create digital experiences that are not just functional, but truly transformative - improving the way we interact with technology and, by extension, the world around us.

As we stand on the cusp of a new era in UX/UI design, it's clear that generative interfaces and dynamic user experiences (DUX) offer immense potential. However, their true power lies not in replacing human designers, but in augmenting their creativity and bridging communication gaps.

The future of interface design is neither purely AI-driven nor exclusively human-created. Instead, it's a synergy of the two - a balance between human intuition and machine efficiency. Generative UI tools, when seen as translators, can democratize the design process, allowing non-designers to better articulate their visions and collaborate more effectively with design professionals.

With its block-like modularity and adaptive nature, DUX promises interfaces that are both rich in functionality and elegantly simple. But as we embrace these technologies, we must remain mindful. The challenges of privacy, technical limitations, user customization, and accessibility demand our attention and creative problem-solving.

Our goal should be to create interfaces that not only delight users with their adaptability, but also respect their privacy, accommodate their diverse needs, and earn their trust.

By maintaining a human-centered approach and using AI as a powerful tool rather than a replacement for human creativity, we can usher in a new golden age of interface design. One where technology seamlessly adapts to human needs, rather than people adapting to technology.

As designers, developers, and innovators, our task is clear: to use these new tools responsibly, always keeping the end-user experience at the heart of our efforts.

In doing so, we can create digital experiences that are not just functional, but truly transformative – enhancing the way we interact with technology and, by extension, with the world around us.

The future of UI design is here, and it's a collaborative effort between human and machine. Let's embrace it, shape it, and ensure it serves to make our digital world more intuitive, accessible, and delightful for all.

As we look to this future, we must ask ourselves: How can we best leverage AI tools to enhance our design processes while maintaining the human touch that gives our creations soul? And how can we ensure that as our interfaces become more dynamic and personalized, they also become more inclusive and respectful of user privacy?

A lot to come, exciting!
